The Two Systems

What's in this lesson

This lesson covers systems designed for deeper reasoning: chain-of-thought evolution, tree-of-thought reasoning, self-reflection mechanisms, deliberative inference, and multi-step planning architectures.

Why this matters (WIIFM)

Understanding these architectures shifts your perspective from seeing AI as a simple text generator to treating it as a reasoning agent that can work through multi-step problems.

Psychologist Daniel Kahneman famously described human thought as two systems: System 1 (fast, intuitive) and System 2 (slow, deliberate).

Early LLMs behaved largely like System 1: they rapidly predicted the next word. Today's reasoning architectures approximate System 2 by spending more compute at inference time to 'think' before they answer.

What is Reasoning-Centric AI?

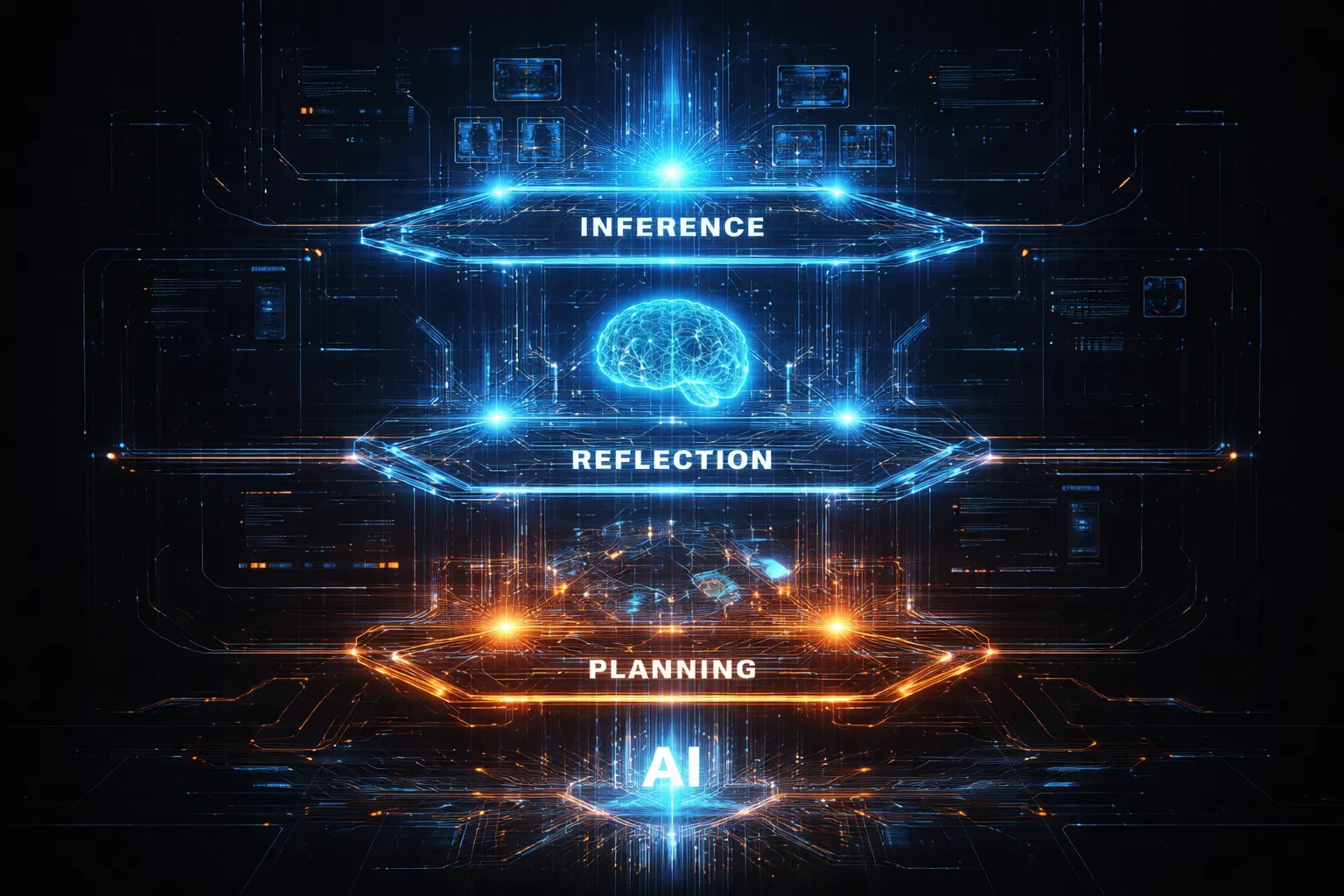

Reasoning-centric AI moves beyond single-pass text generation. It wraps the model in an architecture built for deliberative inference, in which intermediate reasoning is generated, evaluated, and revised before the system commits to an answer.

Chain-of-Thought (CoT) Evolution

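Chain-of-Thought (CoT) prompting changed how LLMs handle multi-step logic. Instead of jumping straight to an answer, the model writes out intermediate steps as text. Each generated token costs another forward pass, so the written steps give the model more computation per problem and a scratchpad of partial results to build on.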
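The difference between a basic prompt and a CoT prompt can be sketched in a few lines. This is a minimal illustration, not a prescribed template: the function names, the prompt wording, and the 'Answer:' convention are all assumptions made for this example, and no model is actually called.

```python
def basic_prompt(question: str) -> str:
    """Ask for the answer directly, with no intermediate steps."""
    return f"Q: {question}\nA:"


def cot_prompt(question: str) -> str:
    """Ask the model to write out its reasoning before the final answer."""
    return (
        f"Q: {question}\n"
        "A: Let's think step by step, then give the final answer "
        "on its own line starting with 'Answer:'."
    )


def extract_answer(completion: str) -> str:
    """Pull the final answer out of a step-by-step completion."""
    for line in reversed(completion.splitlines()):
        if line.startswith("Answer:"):
            return line[len("Answer:"):].strip()
    return completion.strip()  # no marker found: fall back to the raw text


# A hand-written completion standing in for real model output.
completion = "There are 3 boxes with 4 apples each.\n3 * 4 = 12.\nAnswer: 12"
final_answer = extract_answer(completion)
```

The intermediate lines are what distinguish CoT: the model produces them as ordinary text, and only the last line is treated as the answer.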

Knowledge Check

What is the primary difference between basic prompting and Chain-of-Thought (CoT)?

The Limitations of CoT

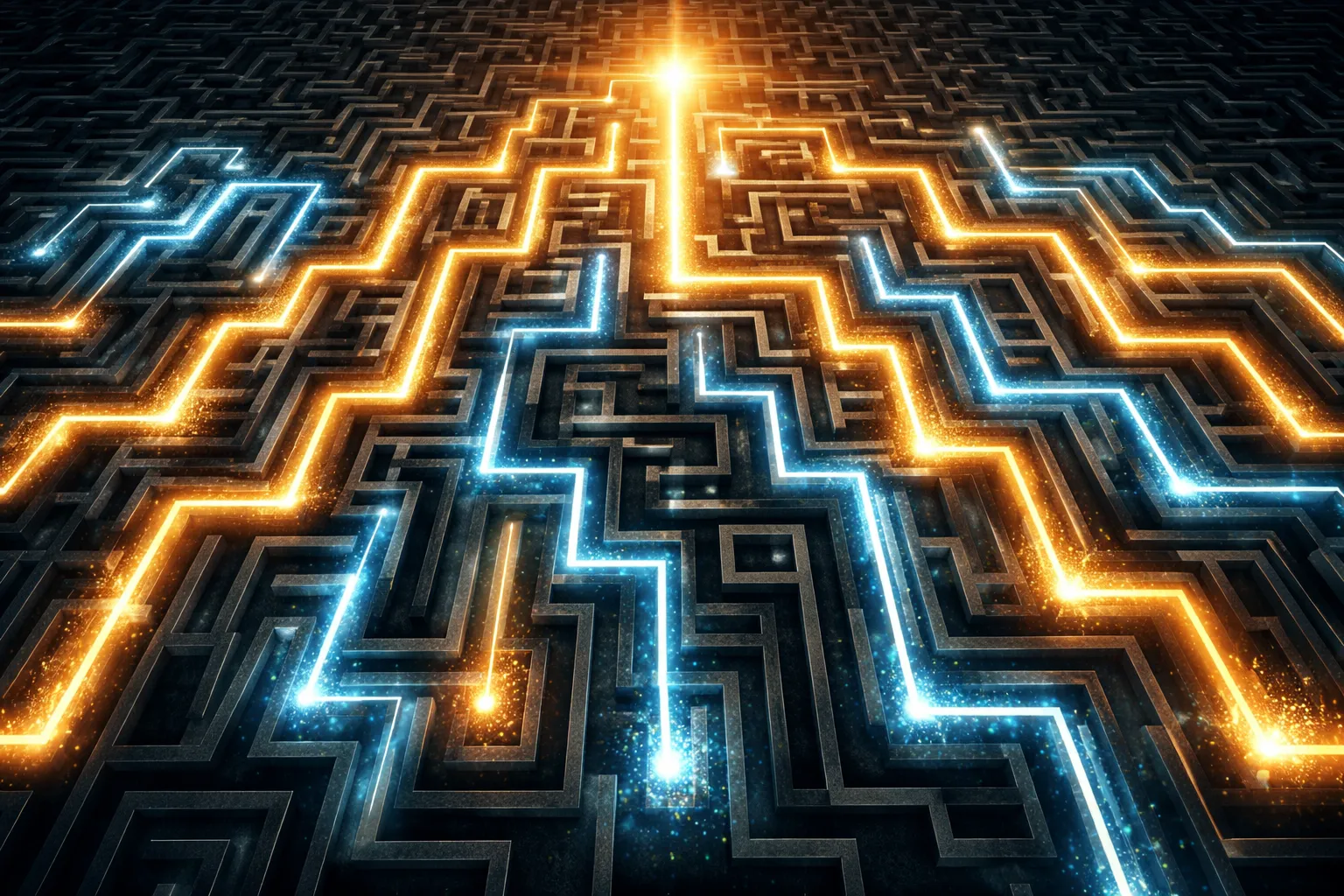

While Chain-of-Thought works well for linear logic, it struggles when a problem requires branching exploration or backtracking.

If the model realizes at step 5 that it took a wrong turn, it cannot erase the earlier steps. They remain in its context, and every later token is conditioned on them. The model tends to forge ahead anyway, which often leads to confident hallucinations.

Tree-of-Thought (ToT) Architecture

Tree-of-Thought (ToT) addresses the linear limitation by treating problem-solving as a search over a tree of possible reasoning steps, which lets the system look ahead, backtrack, and evaluate multiple paths side by side.

1. Thought Generator

Rather than one answer, the system asks the LLM to generate 3-5 distinct possible next steps.

2. State Evaluator

The LLM then acts as a judge, scoring each generated branch (e.g., "sure/likely/impossible") to see if it brings the system closer to the goal.

3. Search Algorithm

A classic search algorithm traverses these branches, pruning the dead ends and pursuing the highest-scoring thoughts.
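The three components above can be sketched on a toy problem. In this hedged example, `generate_thoughts` and `evaluate_state` are simple stand-ins for LLM calls, and the task (pick steps from `STEPS` that sum exactly to `TARGET`) is invented purely for illustration. The search shown is a breadth-first beam search, which is one possible choice.

```python
from typing import List, Optional, Tuple

State = Tuple[int, ...]   # a partial reasoning path: the steps chosen so far
TARGET = 11               # toy goal: choose steps that sum to exactly 11
STEPS = (2, 3, 5)         # toy "thoughts" available at every node


def generate_thoughts(state: State) -> List[State]:
    """Thought generator (stand-in for an LLM call): propose next steps."""
    return [state + (step,) for step in STEPS]


def evaluate_state(state: State) -> float:
    """State evaluator (stand-in for an LLM judge): 0.0 means 'impossible'."""
    total = sum(state)
    return 0.0 if total > TARGET else total / TARGET


def tree_of_thought(beam_width: int = 2, max_depth: int = 6) -> Optional[State]:
    """Search algorithm: keep the best `beam_width` branches at each depth."""
    frontier: List[State] = [()]
    for _ in range(max_depth):
        candidates = [c for s in frontier for c in generate_thoughts(s)]
        scored = [(evaluate_state(c), c) for c in candidates]
        # Prune dead ends, then rank the surviving branches by score.
        survivors = sorted(
            (pair for pair in scored if pair[0] > 0.0),
            key=lambda pair: pair[0],
            reverse=True,
        )
        for _, candidate in survivors:
            if sum(candidate) == TARGET:
                return candidate
        if not survivors:
            return None  # every branch was pruned
        frontier = [c for _, c in survivors[:beam_width]]
    return None


wide = tree_of_thought(beam_width=2)    # keeps an alternative branch alive
greedy = tree_of_thought(beam_width=1)  # follows only the top-scoring branch
```

With a beam of 2 the search returns `(5, 3, 3)`. With a beam of 1 it commits to `(5, 5)`, every continuation overshoots, and it returns `None`. That is the linear failure mode in miniature: a single path with no alternatives cannot recover from a locally attractive wrong step.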

Search Strategies in ToT

To navigate the 'Tree of Thoughts', reasoning systems rely on classic search strategies, such as breadth-first search (BFS) and depth-first search (DFS), adapted for semantic text spaces.
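As one illustration of such a strategy, here is a sketch of depth-first search with backtracking. The toy task, the `expand` and `score` stand-ins for LLM calls, and the `threshold` value are all assumptions made for this example.

```python
from typing import List, Optional, Tuple

State = Tuple[int, ...]   # the steps chosen so far
TARGET = 11               # toy goal: steps must sum to exactly 11
STEPS = (2, 3, 5)         # toy moves available at every node


def expand(state: State) -> List[State]:
    """Stand-in for the thought generator."""
    return [state + (step,) for step in STEPS]


def score(state: State) -> float:
    """Stand-in for the state evaluator; overshooting scores 0.0."""
    total = sum(state)
    return 0.0 if total > TARGET else total / TARGET


def dfs(state: State, visited: List[State], threshold: float = 0.1) -> Optional[State]:
    """Follow the most promising child first; back up when a subtree fails."""
    visited.append(state)
    if sum(state) == TARGET:
        return state
    for child in sorted(expand(state), key=score, reverse=True):
        if score(child) < threshold:
            continue  # prune branches the evaluator rejects
        found = dfs(child, visited, threshold)
        if found is not None:
            return found
    return None  # nothing worked below this node: the caller tries its next child


visited: List[State] = []
solution = dfs((), visited)
```

The `visited` trace records the dead end at `(5, 5)` followed by the recovery through `(5, 3)`. A breadth-first variant would instead expand every surviving branch one level at a time before going deeper.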

Knowledge Check

How does Tree-of-Thought (ToT) solve the 'error propagation' problem found in linear Chain-of-Thought (CoT)?

Self-Reflection Mechanisms

Another widely cited architectural pattern is Reflexion. Here, the model acts as its own critic: after an attempt, it reviews the output and any feedback it received, describes in words what went wrong, and uses those notes to guide the next attempt, all without human intervention.
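A self-reflection loop can be sketched as attempt, critique, remember, retry. The example below is a toy under stated assumptions: `draft` and `critique` are hypothetical rule-based stand-ins for what would be LLM calls, and the task (produce a properly capitalized, punctuated sentence) was chosen only to keep the loop easy to follow.

```python
from typing import List, Tuple


def draft(task: str, reflections: List[str]) -> str:
    """Stand-in for the model's attempt, conditioned on earlier reflections."""
    text = "tree search lets a model backtrack"
    notes = " ".join(reflections)
    if "capitalize" in notes:
        text = text[0].upper() + text[1:]
    if "period" in notes:
        text += "."
    return text


def critique(text: str) -> List[str]:
    """Stand-in for the self-critic: list the problems found in an attempt."""
    issues = []
    if not text[0].isupper():
        issues.append("capitalize the first letter")
    if not text.endswith("."):
        issues.append("end with a period")
    return issues


def reflect_and_retry(task: str, max_trials: int = 3) -> Tuple[str, int]:
    """Retry until the critic finds no issues or the trial budget runs out."""
    memory: List[str] = []  # verbal feedback carried across trials
    attempt = ""
    for trial in range(1, max_trials + 1):
        attempt = draft(task, memory)
        issues = critique(attempt)
        if not issues:
            return attempt, trial
        memory.extend(issues)
    return attempt, max_trials


result, trials_used = reflect_and_retry("Summarize tree search in one sentence.")
```

The first attempt fails both checks, the critiques are stored in `memory`, and the second attempt passes. The `max_trials` cap matters in practice, since a critic that never approves would otherwise loop indefinitely.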

Deliberative Inference (System 2)

A central goal of reasoning AI is scaling 'test-time compute'. Instead of answering in a fraction of a second, the model is allowed to compute for much longer (say, 30 seconds), generating thousands of internal tokens the user never sees in order to plan its response before committing to it.
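One simple way to see the effect of extra test-time compute is self-consistency voting: sample several independent reasoning chains and return the most common final answer. This is a different technique from the hidden internal planning described above, used here only because it is easy to show. `SIMULATED_SAMPLES` and `sample_answer` are hypothetical stand-ins for repeated model calls.

```python
from collections import Counter
from typing import List

# Hypothetical final answers from five independent reasoning chains.
SIMULATED_SAMPLES: List[str] = ["41", "42", "42", "40", "42"]


def sample_answer(question: str, i: int) -> str:
    """Stand-in for one sampled model run; returns only its final answer."""
    return SIMULATED_SAMPLES[i % len(SIMULATED_SAMPLES)]


def self_consistency(question: str, n_samples: int) -> str:
    """Spend more inference compute by sampling n chains and voting."""
    answers = [sample_answer(question, i) for i in range(n_samples)]
    winner, _votes = Counter(answers).most_common(1)[0]
    return winner


question = "What is 6 * 7?"
single_pass = self_consistency(question, n_samples=1)  # one fast answer
deliberate = self_consistency(question, n_samples=5)   # five times the compute
```

In this simulation the single sample happens to be wrong ("41"), while the five-sample vote settles on "42". The cost is roughly linear in `n_samples`, which is the trade-off behind test-time compute: more inference work in exchange for a more reliable answer.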

Multi-Step Planning Agents

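Combining reasoning with action creates Agents. A widely used architecture is ReAct (Reasoning and Acting), which structures the model's behavior as a repeating Thought -> Action -> Observation loop: the model reasons about what to do, calls a tool, and reads the tool's result before reasoning again.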
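The Thought -> Action -> Observation loop can be sketched as follows. This is a minimal illustration, not the reference ReAct implementation: `policy` is a scripted stand-in for the LLM, and `calculator` is a hypothetical tool that handles only expressions of the form "a op b".

```python
import operator
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str, str, str]  # (thought, action, action_input, observation)

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def calculator(expression: str) -> str:
    """Toy tool: evaluates 'a op b' for integer operands."""
    left, op, right = expression.split()
    return str(OPS[op](int(left), int(right)))


def policy(question: str, history: List[Step]) -> Tuple[str, str, str]:
    """Scripted stand-in for the LLM: returns (thought, action, action_input)."""
    if not history:
        return ("I should compute the product with a tool.", "calculator", "17 * 3")
    last_observation = history[-1][3]
    return ("The tool returned the result, so I can answer.", "finish", last_observation)


def react(question: str, tools: Dict[str, Callable[[str], str]], max_steps: int = 5):
    """Run the loop until the policy chooses 'finish' or the budget runs out."""
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, action_input = policy(question, history)
        if action == "finish":
            return action_input, history
        observation = tools[action](action_input)  # an Action yields an Observation
        history.append((thought, action, action_input, observation))
    return "no answer within the step budget", history


answer, trace = react("What is 17 times 3?", {"calculator": calculator})
```

Each pass through the loop appends one observation to `history`, and the next thought is conditioned on it. The `max_steps` budget keeps a confused policy from looping forever.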

Task Decomposition

For highly complex goals, reasoning architectures use Task Decomposition: breaking a large problem into smaller, ordered, individually solvable sub-tasks before writing any of the final output.
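Once a goal has been decomposed, the sub-tasks and their dependencies form a graph that can be ordered before any work starts. The sketch below assumes Python 3.9 or later (for the standard-library `graphlib` module) and uses a hypothetical hand-written sub-task list for a web application. In a real system the list would come from the model itself.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical decomposition: each sub-task maps to the sub-tasks it depends on.
SUBTASKS: Dict[str, Set[str]] = {
    "design data model": set(),
    "build API": {"design data model"},
    "set up payments": {"build API"},
    "build frontend": {"build API"},
    "write tests": {"build frontend", "set up payments"},
    "deploy": {"write tests"},
}


def plan(subtasks: Dict[str, Set[str]]) -> List[str]:
    """Return an execution order in which every dependency comes first."""
    return list(TopologicalSorter(subtasks).static_order())


order = plan(SUBTASKS)
```

Several valid orders exist here, because "set up payments" and "build frontend" do not depend on each other, so the code guarantees only that dependencies come first. `TopologicalSorter` raises `graphlib.CycleError` if the sub-tasks depend on each other in a loop, which is a useful sanity check on a generated plan.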

Scenario: The user asks the AI to "Build a full-stack e-commerce app." Choose an approach:

Knowledge Check

In the ReAct (Reasoning and Acting) loop, what is the direct outcome of an 'Action'?

Key Takeaways

- System 1 vs System 2: Modern AI is shifting from fast, intuitive next-token prediction to slow, deliberative inference at test time.

- Chain-of-Thought (CoT): Forces step-by-step reasoning but suffers from linear error compounding.

- Tree-of-Thought (ToT): Enables search algorithms over multiple possible reasoning paths, allowing the AI to backtrack and evaluate alternatives.

- Self-Reflection: Architectures like Reflexion allow the model to critique its own attempts and use that feedback to correct them before settling on a final answer.

- Agents & Decomposition: ReAct loops let AI interact with external tools and ground its reasoning in their results, while task decomposition makes large, complex goals manageable.

Assessment

You have reached the end of the tutorial. The following 5 questions will evaluate your understanding of reasoning-centric architectures.

You need to score at least 80% to earn your certificate.

Question 1 of 5

Which architecture explicitly solves complex logic by exploring multiple reasoning paths simultaneously and pruning unpromising branches?

Question 2 of 5

What is the primary purpose of self-reflection mechanisms (like Reflexion) in reasoning AI?

Question 3 of 5

In a multi-step planning agent utilizing the ReAct framework, what typically follows an 'Action'?

Question 4 of 5

What is the critical weakness that causes traditional Chain-of-Thought (CoT) to fail on highly complex, branching logic puzzles?

Question 5 of 5

Which of the following best describes the concept of 'deliberative inference' (System 2 AI)?